Monthly Archives: June 2009

I’m thinking about using Amazon, IBM, or Rackspace…

At Gartner, much of our coverage of the cloud system infrastructure services market (e.g., Amazon, GoGrid, Joyent) is an outgrowth of our coverage of the hosting market. Hosting is certainly not the only common use case for cloud, but it is the use case that is driving much of the revenue right now, a high percentage of the providers are hosters, and most of the offerings lean heavily in this direction.

This leads to some interesting phenomena, like the inquiries where the client begins with, “I’m considering using Amazon, IBM, or Rackspace…” That’s the result of customers thinking about the trade-offs between different types of solutions, not just vendors. Also, ultimately, customers buy solutions to business needs, not technology.

Customers say things like, “I’ve got an e-commerce website that uses the following list of technologies. I get a lot more traffic around Mother’s Day and Christmas. Also, I run marketing campaigns, but I’m never sure how much additional traffic an advertisement will drive to my site.”

If you’re currently soaking in the cloud hype, you might quickly jump on that to say, “A perfect case for cloud!” and it could be, but then you get into other questions. Is maximum cost savings the most important budgetary aspect, or is predictability of the bill more important? When he has traffic spikes, are they gradual, giving him hours (or even days) to build up the necessary capacity, or are they sudden, requiring provisioning in as close to real time as possible? Does he understand how to architect the infrastructure (and app!) to scale, or does he need help? Does his application scale horizontally or vertically? Does he want to do capacity planning himself, or does he want someone else to take care of it? (Capacity planning equals budget planning, so it’s rarely an, “eh, because we can scale quickly, it doesn’t matter.”) Does he have a good change management process, or does he want a provider to shepherd that for him? Does he need to be PCI compliant, and if so, how does he plan to achieve that? How much systems management does he want to do himself, and to what degree does he have automation tools, or want to use provider-supplied automation? And so on.
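The first of those questions, savings versus a predictable bill, can be illustrated with a little arithmetic. The Python sketch below is a toy comparison under made-up assumptions: demand is expressed as "servers needed per hour", the two hourly rates are invented, and `fixed_bill` and `on_demand_bill` are hypothetical helpers rather than any provider's pricing model.

```python
from typing import List


def fixed_bill(servers_needed: List[int], rate: float) -> float:
    """Provision for the peak hour and pay for that many servers every hour.

    The bill is known in advance, whatever the traffic turns out to be.
    """
    return max(servers_needed) * len(servers_needed) * rate


def on_demand_bill(servers_needed: List[int], rate: float) -> float:
    """Pay only for the servers actually needed in each hour.

    The bill follows the traffic, so it is only as predictable as the traffic.
    """
    return sum(servers_needed) * rate


# Invented numbers: a mostly quiet site with one spike, and a flat one.
spiky = [2, 2, 2, 10]
flat = [4, 4, 4, 4]
FIXED_RATE = 0.5       # assumed price per server-hour on a fixed contract
ON_DEMAND_RATE = 1.0   # assumed (higher) price per server-hour on demand
```

With these invented figures, the spiky profile is cheaper on demand while the flat profile is cheaper on a fixed contract, which is why the shape of the traffic matters as much as the headline rate.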

That’s just one of the use cases for cloud compute as a service. Similar sets of questions exist in each of the other use cases where cloud is a possible solution. It’s definitely not as simple as “more efficient utilization of infrastructure equals Win”.

Does Procurement know what you care about?

In many enterprises, IT folks decide what they want to buy and who they want to buy it from, but Procurement negotiates the contract, manages the relationship, and has significant influence on renewals. Right now, especially, purchasing folks have a lot of influence, because they’re often now the ones who go out and shop for alternatives that might be cheaper, forcing IT into the position of having to consider competitive bids.

A significant percentage of enterprise seatholders who use industry advisory firms have inquiry access for their Procurement group, so I routinely talk to people who work in purchasing. Even the ones who are dedicated to an IT procurement function tend not to have more than a minimal understanding of technology. Moreover, when it comes to renewals, they often have no thorough understanding of what exactly it is that the business is actually trying to buy.

Increasingly, though, procurement is self-educating via the Internet. I’ve been seeing this a bit in relation to the cloud (although there, the big waves are being made by business leadership, especially the CEO and CFO, reading about cloud in the press and online, more so than Purchasing), and a whole lot in the CDN market, where things like Dan Rayburn’s blog posts on CDN pricing provide some open guidance on market pricing. Bereft of context, and armed with just enough knowledge to be dangerous, purchasing folks looking across a market for the cheapest place to source something can arrive at incorrect conclusions about what IT is really trying to source, and can misjudge how much negotiating leverage they’ll really have with a vendor.

The larger the organization gets, the greater the disconnect between IT decision-makers and the actual sourcing folks. In markets where commoditization is extant or in process, vendors have to keep that in mind, and IT buyers need to make sure that the actual procurement staff has enough information to make good negotiation decisions, especially if there are any non-commodity aspects that are important to the buyer.

How not to use a Magic Quadrant

The Web hosting Magic Quadrant is currently in editing, the culmination of a six-month process (despite my strenuous efforts to keep it to four months). Many, many client conversations, reference calls, and vendor discussions later, we arrive at the demonstration of a constant challenge: the user tendency to misinterpret the Magic Quadrant, and the correlating vendor tendency to become obsessive about which quadrant they’re placed in.

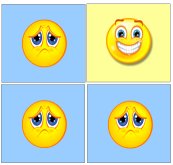

Even though Gartner has an extensive explanation of the Magic Quadrant methodology on our website, vendors and users alike tend to oversimplify what it means. So a complex methodology ends up translating down to something like this:

But the MQ isn’t intended to be used this way. Just because a vendor isn’t listed as a Leader doesn’t mean that they suck. It doesn’t mean that they don’t have enterprise clients, that those clients don’t like them, that their product sucks, that they don’t routinely beat out Leaders for business, or, most importantly, that we wouldn’t recommend them or that you shouldn’t use them.

The MQ reflects the overall position of a vendor within an entire market. An MQ leader tends to do well at a broad selection of products/services within that market, but is not necessarily the best at any particular product/service within that market. And even the vendor who is typically best at something might not be the right vendor for you, especially if your profile or use case deviates significantly from the “typical”.

I recognize, of course, that one of the reasons that people look at visual tools like the MQ is that they want to rapidly cull down the number of vendors in the market, in order to make a short-list. I’m not naive about the fact that users will say things like, “We will only use Leaders” or “We won’t use a Niche Player”. However, this is explicitly what the MQ is not designed to do. It’s incredibly important to match your needs to what a vendor is good at, and you have to read the text of the MQ in order to understand that. Also, there may be vendors who are too small or too need-specific to have qualified to be on the MQ, who shouldn’t be overlooked.

Also, an MQ reflects only a tiny percentage of what an analyst actually knows about the vendor. Its beauty is that it reduces a ton of quantified specific ratings (nearly 5 dozen, in the case of my upcoming MQ) to a point on a graph, and a pile of qualitative data to somewhere between six and ten one-or-two-sentence bullet points about a vendor. It’s convenient reference material that’s produced by an exhaustive (and exhausting) process, but it’s not necessarily the best medium for expressing an analyst’s nuanced opinions about a vendor.

I say this in advance of the Web hosting MQ’s release: In general, the greater the breadth of your needs, or the more mainstream they are, the more likely it is that an MQ’s ratings are going to reflect your evaluation of the vendors. Vendors who specialize in just a single use case, like most of the emerging cloud vendors, have market placements that reflect that specialization, although they may serve that specific use case better than vendors who have broader product portfolios.

ICANN and DNS

ICANN has been on the soapbox on the topic of DNS recently, encouraging DNSSEC adoption, and taking a stand against top-level domain (TLD) redirection of DNS queries.

The DNS error resolution market — usually manifesting itself as the display of an advertising-festooned Web page when a user tries to browse to a non-existent domain — has been growing over the years, primarily thanks to ISPs who have foisted it upon their users. The feature is supported in commercial DNS software and services that target the network service provider market; in most current deployments of this sort, business customers typically have an opt-out option, and consumers might as well.

While ICANN’s Security and Stability Advisory Committee (SSAC) believes this is detrimental to the DNS, their big concern is what happens when this is done at the TLD level. We all got a taste of that with VeriSign’s SiteFinder back in 2003, which affected the .com and .net TLDs. Since then, though, similar redirections have found their way into smaller TLDs (i.e., ones where there’s no global outcry against the practice). SSAC wants this practice explicitly forbidden at the TLD level.
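For readers who want to see what this redirection looks like from the client side, here is a minimal Python sketch. It rests on one assumption: a long random name under a TLD should not exist, so if such names consistently resolve to an address, something between you and the authoritative servers is synthesizing answers. The names `looks_redirected`, `random_label`, and `system_resolver` are hypothetical helpers, and the check cannot tell whether the redirection happens at the ISP's resolver or at the TLD itself.

```python
import secrets
import socket
from typing import Callable, Optional

# A resolver takes a hostname and returns an IP address string,
# or None when the name does not resolve.
Resolver = Callable[[str], Optional[str]]


def random_label(length: int = 20) -> str:
    """Build a DNS label that is very unlikely to be registered."""
    alphabet = "abcdefghijklmnopqrstuvwxyz0123456789"
    return "".join(secrets.choice(alphabet) for _ in range(length))


def looks_redirected(resolve: Resolver, tld: str, probes: int = 3) -> bool:
    """Return True if every random name probed under `tld` resolves.

    A single failed lookup is taken as evidence that nonexistent
    names are being reported honestly.
    """
    for _ in range(probes):
        name = f"{random_label()}.{tld}"
        if resolve(name) is None:
            return False
    return True


def system_resolver(name: str) -> Optional[str]:
    """Adapter around the operating system's resolver.

    Any lookup failure (including having no network at all) is
    reported as None, so results are only meaningful when online.
    """
    try:
        return socket.gethostbyname(name)
    except socket.gaierror:
        return None
```

Calling `looks_redirected(system_resolver, "com")` on a live connection would run the probe against whatever resolver your machine is configured to use; passing a stand-in function instead makes the logic easy to exercise offline.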

I personally feel that the DNS error resolution market, at whatever level of the DNS food chain, is harmful to the DNS and to the Internet as a whole. The Internet Architecture Board’s evaluation is a worthy indictment, although it’s missing one significant use case — the VPN issues that redirection can cause. Nevertheless, I also recognize that until there are explicit standards forbidding this kind of use, it will continue to be commercially attractive and thus commonplace; indeed, I continue to assist commercial DNS companies, and service providers, who are trying to facilitate and gain revenue related to this market. (Part of the analyst ethic is much like a lawyer’s; it requires being able to put aside one’s personal feelings about a matter in order to assist a client to the best of one’s ability.)

I applaud ICANN taking a stand against redirection at the TLD level; it’s a start.

Overpromising

I’ve turned one of my earlier blog entries, Smoke-and-mirrors and cloud software, into a full-blown research note: “Software on Amazon’s Elastic Compute Cloud: How to Tell Hype From Reality” (clients only). It’s a Q&A for your software vendor, if they suggest that you deploy their solution on EC2, or if you want to do so and you’re wondering what vendor support you’ll get if you do so. The information is specific to Amazon (since most client inquiries of this type involve Amazon), but somewhat applicable to other cloud compute service providers, too.

More broadly, I’ve noticed an increasing tendency on the part of cloud compute vendors to over-promise. It’s not credible, and it leaves prospective customers scratching their heads and feeling like someone has tried to pull a fast one on them. Worse still, it could leave more gullible businesses going into implementations that ultimately fail. This is exactly what drives the Trough of Disillusionment of the hype cycle and hampers productive mainstream adoption.

Customers: When you have doubts about a cloud vendor’s breezy claims that sure, it will all work out, ask them to propose a specific solution. If you’re wondering how they’ll handle X, Y, or Z, ask them and don’t be satisfied with assurances that you (or they) will figure it out.

Vendors: I believe that if you can’t give the customer the right solution, you’re better off letting him go do the right thing with someone else. Stretching your capabilities can be positive for both you and your customer, but if your solution isn’t the right path, or it is a significantly more difficult path than an alternative solution, both of you are likely to be happier if that customer doesn’t buy from you right now, at least not in that particular context. Better to come back to this customer eventually when your technology is mature enough to meet his needs, or look for the customer’s needs that do suit what you can offer right now. If you screw up a premature implementation, chances are that you won’t get the chance to grow this business the way that you hoped. There are enough early adopters with needs that you can meet, that you should be going after them. There’s nothing wrong with serving start-ups and getting “foothold” implementations in enterprises; don’t bite off more than you can chew.

Almost a decade of analyst experience has shown me that it’s hard for a vendor to get a second chance with a customer if they screwed up the first encounter. Even if, many many years later, the vendor has a vastly augmented set of capabilities and is managed entirely differently, a burned customer still tends to look at them through the lens of that initial experience, and often takes that attitude to the various companies they move to. My observation is that in IT outsourcing, customers certainly hold vendor “grudges” for more than five years, and may do so for more than a decade. This is hugely important in emerging markets, as it can dilute early-mover advantages as time progresses.

Job-based vs. request-based computing

Companies are adopting cloud systems infrastructure services in two different ways: job-based “batch processing”, non-interactive computing; and request-based, real-time-response, interactive computing. The two have distinct requirements, but much as in the olden days of time-sharing, they can potentially share the same infrastructure.

Job-based computing is usually of a number-crunching nature — scientific or high-performance computing. This is the sort of thing that users usually like to do on parallel computers with very fast interconnection (Infiniband or the equivalent thereof), but in the cloud, total compute time may be traded for a lower cost, and, eventually, algorithms may be altered to reduce dependency on server-to-server or server-to-storage communications. Putting these jobs on the cloud generally reduces reliance on, and scheduling for, a fixed amount of supercomputing infrastructure. Alternatively, job-based computing on the cloud may represent one-time computationally-intensive projects (transcoding, for instance).

Request-based computing, on the other hand, demands instant response to interaction. This kind of use of the cloud is classically for Web hosting, whether the interaction is based on a user with a browser, or another server making Web services requests. Most of this kind of computing is not CPU-intensive.

Observation: Most cloud compute services today target request-based computing, and this is the logical evolution of the hosting industry. However, a significant amount of large-enterprise immediate-term adoption is job-based computing.

Dilemma for cloud providers: Optimize infrastructure with low-power low-cost processors for request-based computing? Or try to balance job-based and request-based compute in a way that maximizes efficient use of faster CPUs?
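One way to picture the second option is a scheduler that gives request-based traffic first claim on capacity and lets batch jobs soak up whatever is left in each time slot. The Python sketch below is a toy model under stated assumptions: capacity and demand are in abstract "CPU units", the per-slot figures are made up, and `fill_idle_capacity` is a hypothetical helper, not any provider's actual scheduler.

```python
from typing import List, Tuple


def fill_idle_capacity(
    capacity: int, request_load: List[int], batch_backlog: int
) -> Tuple[List[int], int]:
    """Give interactive requests priority; run batch work in the leftover.

    Returns the batch units run in each slot and the backlog still pending.
    """
    batch_per_slot: List[int] = []
    for load in request_load:
        idle = max(capacity - load, 0)   # requests are served first
        run = min(idle, batch_backlog)   # batch only uses what is idle
        batch_per_slot.append(run)
        batch_backlog -= run
    return batch_per_slot, batch_backlog


def utilization(capacity: int, request_load: List[int], batch: List[int]) -> float:
    """Fraction of total capacity doing useful work across all slots."""
    used = sum(min(r, capacity) + b for r, b in zip(request_load, batch))
    return used / (capacity * len(request_load))


# Invented example: 10 units of capacity, four time slots, 15 units of batch work.
CAPACITY = 10
REQUESTS = [2, 9, 12, 4]
batch_run, backlog_left = fill_idle_capacity(CAPACITY, REQUESTS, 15)
```

In this invented example the request traffic alone would leave the infrastructure a little under two-thirds used; letting batch work fill the gaps uses all of it, at the price of the batch jobs finishing whenever the interactive load allows.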

“Enterprise class” cloud

There seems to be an endless parade of hosting companies eager to explain to me that they have an “enterprise class” cloud offering. (Cloud systems infrastructure services, to be precise; I continue to be careless in my shorthand on this blog, although all of us here at Gartner are trying to get into the habit of using cloud as an adjective attached to more specific terminology.)

If you’re a hosting vendor, get this into your head now: Just because your cloud compute service is differentiated from Amazon’s doesn’t mean that you’re differentiated from any other hoster’s cloud offering.

Yes, these offerings are indeed targeted at the enterprise. Yes, there are in fact plenty of non-startups who are ready and willing and eager to adopt cloud infrastructure. Yes, there are features that they want (or need) that they can’t get on some of the existing cloud offerings, especially those of the early entrants. But that does not make them unique.

These offerings tend to share the following common traits:

1. “Premium” equipment. Name-brand everything. HP blades, Cisco gear except for F5’s ADCs, etc. No white boxes.

2. VMware-based. This reflects the fact that VMware is overwhelmingly the most popular virtualization technology used in enterprises.

3. Private VLANs. Enterprises perceive private VLANs as more secure.

4. Private connectivity. That usually means Internet VPN support, but also the ability to drop your own private WAN connection into the facility. Enterprises who are integrating cloud-based solutions with their legacy infrastructure often want to be able to get MPLS VPN connections back to their own data center.

5. Colocated or provider-owned dedicated gear. Not all workloads virtualize well, and some things are available only as hardware. If you have Oracle RAC clusters, you are almost certainly going to do it on dedicated servers. People have Google search appliances, hardware ADCs custom-configured for complex tasks, black-box encryption devices, etc. Dedicated equipment is not going away for a very, very long time. (Clients only: See statistics and advice on what not to virtualize.)

6. Managed service options. People still want support, managed services, and professional services; the cloud simplifies and automates some operations tasks, but we have a very long way to go before it fulfills its potential to reduce IT operations labor costs. And this, of course, is where most hosters will make their money.

These are traits that it doesn’t take a genius to think of. Most are known requirements established through a decade and a half of hosting industry experience. If you want to differentiate, you need to get beyond them.

On-demand cloud offerings are a critical evolution stage for hosters. I continue to be very, very interested in hearing from hosters who are introducing this new set of capabilities. For the moment, there’s also some differentiation in which part of the cloud conundrum a hoster has decided to attack first, creating provider differences for both the immediate offerings and the near-term roadmap offerings. But hosters are making a big mistake by thinking their cloud competition is Amazon. Amazon certainly is a competitor now, but a hoster’s biggest worry should still be other hosters, given the worrisome similarities in the emerging services.

What makes for an effective MQ briefing?

My colleague Ted Chamberlin and I are currently finalizing the new Gartner Magic Quadrant for Web Hosting. This year, we’ve nearly doubled the number of providers on the MQ, adding a bunch of cloud providers who offer hosting services (i.e., providers who are cloud system infrastructure service providers, and who aren’t pure storage or backup).

The draft has gone out for vendor review, and these last few days have been occupied by more than a dozen conversations with vendors about where they’ve placed in the MQ. (No matter what, most vendors are convinced they should be further right and further up.) Over the course of these conversations, one clear pattern seems to be characterizing this year: We’re seeing lots of data presented in the feedback process that wasn’t presented as part of the MQ briefing or any previous briefing the vendor did with us.

I recognize the MQ can be a mysterious process to vendors. So here’s a couple of thoughts from the analyst side on what makes for effective MQ briefing content. These are by no means universal opinions, but may be shared by my colleagues who cover service businesses.

In brief, the execution axis is about what you’re doing now. The vision axis is about where you’re going. A set of definitions for the criteria on each axis is included with every vendor notification that begins the Magic Quadrant process. If you’re a vendor being considered for an MQ, you really want to read the criteria. We do not throw darts to determine vendor placement. Every vendor gets a numerical rating on every single one of those criteria, and a tool plots the dots. It’s a good idea to address each of those criteria in your briefing (or supplemental material, if you can’t fit everything you need into the briefing).
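To make the "numerical rating on every criterion, then a tool plots the dots" idea concrete, here is a minimal Python sketch. The criterion names, the weights, and the rating scale are hypothetical illustrations, not Gartner's actual criteria or weighting; the sketch only shows how a pile of per-criterion ratings could be collapsed into a single (vision, execution) point.

```python
from typing import Dict, Tuple

# Hypothetical criteria and weights, for illustration only.
EXECUTION_WEIGHTS: Dict[str, float] = {
    "product_service": 3.0,
    "customer_experience": 2.0,
    "operations": 1.0,
}
VISION_WEIGHTS: Dict[str, float] = {
    "market_understanding": 2.0,
    "offering_strategy": 3.0,
    "innovation": 1.0,
}


def weighted_average(ratings: Dict[str, float], weights: Dict[str, float]) -> float:
    """Collapse per-criterion ratings into one axis score."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[name] * w for name, w in weights.items()) / total_weight


def plot_point(ratings: Dict[str, float]) -> Tuple[float, float]:
    """Return (vision, execution): the x and y coordinates of a vendor's dot."""
    return (
        weighted_average(ratings, VISION_WEIGHTS),
        weighted_average(ratings, EXECUTION_WEIGHTS),
    )
```

The sketch refuses to place a vendor that has an unrated criterion, which echoes the point above: every criterion gets a number, so a criterion you never addressed still ends up rated from whatever else is known.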

We generally have reasonably good visibility into execution from our client base, but the less market presence you have, especially among Gartner’s typical client base (mid-size business to large enterprise, and tech companies), the less we’ve probably seen you in deals or have gotten feedback from your customers. Similarly, if you don’t have much in the way of a channel, there’s less of a chance any of your partners have talked to us about what they’re doing with you. Thus, your best use of briefing time for an MQ is to fill us in on what we don’t know about your company’s achievements — the things that aren’t readily culled from publicly-available information or talking to prospects, customers, and partners.

It’s useful to briefly summarize your company’s achievements over the last year — revenue growth, metrics showing improvements in various parts of the business, new product introductions, interesting customer wins, and so forth. Focus on the key trends of your business. Tell us what strategic initiatives you’ve undertaken and the ways they’ve contributed to your business. You can use this to help give us context to the things we’ve observed about you. We may, for instance, have observed that your customer service seems to have improved, but not know what specific measures you took to improve it. Telling us also helps us to judge how far along the curve you are with an initiative, which in turn helps us to advise our clients better and more accurately rate you.

Vision, on the other hand, is something that only you can really tell us about. Because this is where you’re going, rather than where you are now or where you’ve been, the amount of information you’re willing to disclose is likely to directly correlate to our judgement of your vision. A one-year, quarter-by-quarter roadmap is usually the best way to show us what you’re thinking; a two-year roadmap is even better. (Note that we do rate track record, so you don’t want to claim things that you aren’t going to deliver — you’ll essentially take a penalty next year if you failed to deliver on the roadmap.) We want to know what you think of the market and your place in it, but the very best way to demonstrate that you’re planning to do something exciting and different is to tell us what you’re expecting to do. (We can keep the specifics under NDA, although the more we can talk about publicly, the more we can tell our clients that if they choose you, there’ll be some really cool stuff you’ll be doing for them soon.) If you don’t disclose your initiatives, we’re forced to guess based on your general statements of direction, and generally we’re going to be conservative in our guesses, which probably means a lower rating than you might otherwise have been able to get.

The key thing to remember, though, is that if at all possible, an MQ briefing should be a summary and refresher, not an attempt to cram a year’s worth of information into an hour. If you’ve been doing routine briefings covering product updates and the launch of key initiatives, you can skip all that in an MQ briefing, and focus on presenting the metrics and key achievements that show what you’ve done, and the roadmap that shows where you’re going. Note that you don’t have to be a client in order to conduct briefings. If MQ placement or analyst recommendations are important to your business, keep in mind that when you keep an analyst well-informed, you reap the benefit the whole year ’round in the hundreds or even thousands of conversations analysts have with your prospective customers, not just on the MQ itself.

Wading into the waters of cloud adoption

I’ve been pondering the dot write-ups that I need to do for Gartner’s upcoming Cloud Computing hype cycle, as well as my forthcoming Magic Quadrant on Web Hosting (which now includes a bunch of cloud-based providers), and contemplating this thought:

We are at the start of an adoption curve for cloud computing. Getting from here, to the realization of the grand vision, will be, for most organizations, a series of steps into the water, and not a grand leap.

Start-ups have it easy; by starting with a greenfield build, they can choose from the very beginning to embrace new technologies and methodologies. Established organizations can sometimes do this with new projects, but still have heavy constraints imposed by the legacy environment. And new projects, especially now, are massively dwarfed by the existing installed base of business IT infrastructure: existing policies (and regulations), processes, methodologies, employees, and, of course, systems and applications.

The weight of all that history creates, in many organizations, a “can’t do” attitude. Sometimes that attitude comes right from the top of the business or from the CIO, but I’ve also encountered many a CIO eager to embrace innovation, only to be stymied by the morass of his organization’s IT legacy. Part of the fascination of cloud services, of course, is that they allow business leaders to “go rogue,” bypassing the IT organization entirely in order to get what they want done ASAP, without much in the way of constraints and oversight. The counter-force is the move to develop private clouds that provide greater agility to internal IT.

Two client questions have been particularly prominent in the inquiries I’ve been taking on cloud (a super-hot topic of inquiry, as you’d expect): Is this cloud stuff real? and What can I do with the cloud right now? Companies are sticking their toes into the water, but few are jumping off the high dive. What interests me, though, is that many are engaging in active vendor discussions about taking the plunge, even if their actual expectation (or intent) is to just wade out a little. Everyone is afraid of sharks; it’s viewed as a high-risk activity.

In my research work, I have been, like the other analysts who do core cloud work here at Gartner, looking at a lot of big-picture stuff. But I’ve been focusing my written research very heavily on the practicalities of immediate-term adoption — answering the huge client demand for frameworks to use in formulating and executing on near-term cloud infrastructure plans, and in long-term strategic planning for their data centers. The interest is undoubtedly there. There’s just a gap between the solutions that people want to adopt, and the solutions that actually exist in the market. The market is evolving with tremendous rapidity, though, so not being able to find the solution you want today doesn’t mean that you won’t be able to get it next year.